If you’ve been around, you may know Elsevier for surveillance publishing. Old hands will recall their running arms fairs. To this storied history we can add “automated bullshit pipeline”.

In Surfaces and Interfaces, online 17 February 2024:

Certainly, here is a possible introduction for your topic:Lithium-metal batteries are promising candidates for high-energy-density rechargeable batteries due to their low electrode potentials and high theoretical capacities [1], [2].

In Radiology Case Reports, online 8 March 2024:

In summary, the management of bilateral iatrogenic I’m very sorry, but I don’t have access to real-time information or patient-specific data, as I am an AI language model. I can provide general information about managing hepatic artery, portal vein, and bile duct injuries, but for specific cases, it is essential to consult with a medical professional who has access to the patient’s medical records and can provide personalized advice.

Edit to add this erratum:

The authors apologize for including the AI language model statement on page 4 of the above-named article, below Table 3, and for failing to include the Declaration of Generative AI and AI-assisted Technologies in Scientific Writing, as required by the journal’s policies and recommended by reviewers during revision.

Edit again to add this article in Urban Climate:

The World Health Organization (WHO) defines HW as “Sustained periods of uncharacteristically high temperatures that increase morbidity and mortality”. Certainly, here are a few examples of evidence supporting the WHO definition of heatwaves as periods of uncharacteristically high temperatures that increase morbidity and mortality

And this one in Energy:

Certainly, here are some potential areas for future research that could be explored.

Can’t forget this one in TrAC Trends in Analytical Chemistry:

Certainly, here are some key research gaps in the current field of MNPs research

Or this one in Trends in Food Science & Technology:

Certainly, here are some areas for future research regarding eggplant peel anthocyanins,

And we mustn’t ignore this item in Waste Management Bulletin:

When all the information is combined, this report will assist us in making more informed decisions for a more sustainable and brighter future. Certainly, here are some matters of potential concern to consider.

The authors of this article in Journal of Energy Storage seem to have used GlurgeBot as a replacement for basic formatting:

Certainly, here’s the text without bullet points:

What I kinda appreciate about all this AI stuff is that people who a few years ago were convinced that postmodernism was a poison that was destroying Western civilization are now just cool with “it’s just text, bro, it’s all the same!”

I mean, what’s more postmodern than looking at some text generated by spicy autocomplete, deciding it’s just like something a human would write, and therefore the model is as intelligent as a human?

it’s fabulously postmodern in that it handily demonstrates the awful outcomes of a hegemonic modernist project

It gets better: and all of the “metamodern” post-postmodern solutions on the table revolve around making the bot “friendly”

Now with 404media coverage! https://www.404media.co/scientific-journals-are-publishing-papers-with-ai-generated-text/

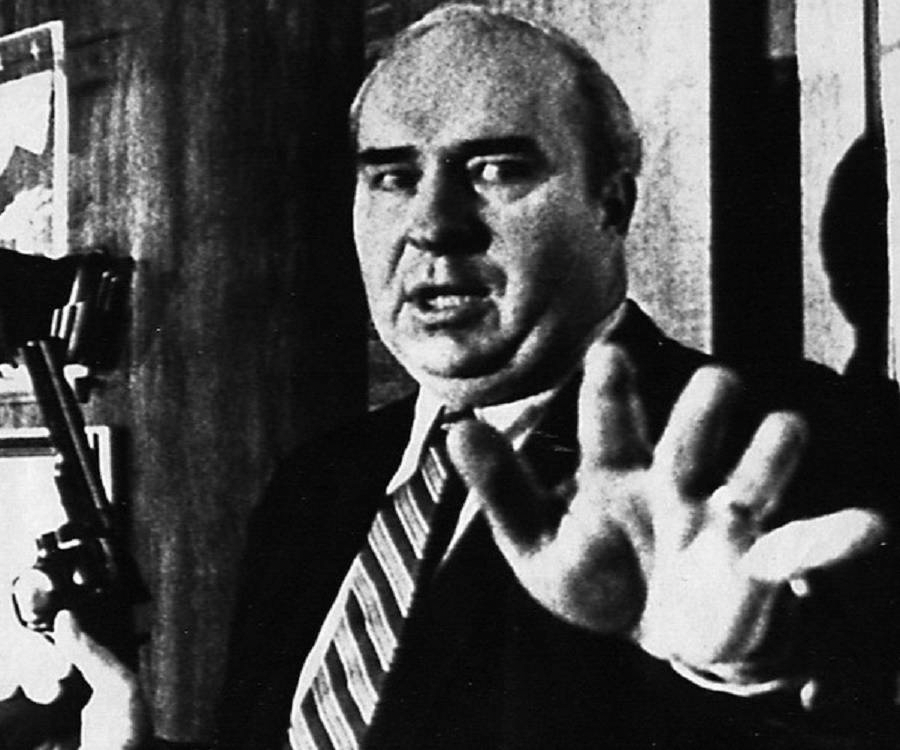

holy crap, did anyone see the 404 article on ai-generated breastfeeding photos that “mom daily” is posting in order to avoid the nudity filters at Facebook? This one appeared with hashtag #Godisgood and #jenniferlopez because no one knows the purpose of hashtags anymore I guess.

When the hashtag says “God is good” but the picture says “God is dead”

God creates three-legged giant to make more laps for children to sit on? Or is that Jennifer Lopez’s job?

@[email protected] @skillissuer image attached for the mastodon readers. I hadn’t noticed that posts to the blog don’t keep their links or image attachments when viewed on mastodon.

https://www.404media.co/horrific-ai-generated-breastfeeding-images-on-facebook-bypass-moderation/

I love this. Looks like AI is good for something unexpected: exposing people who aren’t doing their jobs. These journals weren’t doing peer review properly/at all. I saw your comments with IEEE and the other journals, how embarrassing for them. What a great day!

spicy autocomplete

Lmao. I don’t know if you came up with this but I’m stealing it.

Spicy is one of my favourite words that’s taken on a new word sense in a slang context. It’s so versatile.

I didn’t invent it — picked it up around Mastodon somewhere.

also authors weren’t doing their job writing these papers

What a piece of shite company. Also, props to you for calling it spicy autocomplete and not ai.

Ooh I’m getting some fun results with this search: https://scholar.google.com/scholar?as_ylo=2024&q=%22certainly,+here%22±chatgpt&hl=en&as_sdt=0,22

jesus fuck it’s bad, it’s not only plagiarism machine output but also base material is crank shit

As a large language model, they’d better start citing me, I need tenure.

Let’s invite Taylor & Francis to the party. This book chapter has a “results” section that reads like the whole thing came out of GlurgeBot, with the beginning clumsily edited to hide that fact:

An AI language model do not have access to data or specific research findings. However, in a research paper on advancing early cancer detection with machine learning, the experimental results would typically involve evaluating the performance of machine learning models for early cancer detection.

Worst part - I can’t be certain whether “GlurgeBot” is a sneer or an actual product.

Oh look, here’s MDPI doing the same thing:

Certainly, here is the revised paragraph:

By solving Equation (8), …

that’s par for the course for MDPI

And Wiley:

Certainly, here are the formulas for calculating accuracy

And IEEE!

As an AI language model, I don’t have access to the specific results and findings of any particular research study. However, some general guidance is provided on how a research study should report and discuss its findings. In general, the results section of a research study should provide a clear and concise presentation of the data and findings. This can include tables, figures, and statistical analysis to support the results. The discussion section should then provide a more detailed interpretation and explanation of the results, including any limitations of the study and implications for future research.

Also this:

As an AI language model, I cannot determine how good your results are without more context.

I don’t know why the Journal of Advanced Zoology would be publishing “Lexico-Stylistic Functions of Argotisms in English Language”, but there you go:

I apologize for the confusion, but as an AI language model, I don’t have access to specific articles or their sections, such as the «Introduction» section of the article «Lexico-stylistic functions of argotisms in the English language». I can provide you with a general outline of what an introduction section might cover in an article on this topic

Just now I tried to find what an argotism is and the top result was that very paper.

The Oxford English Dictionary defines argot as “The jargon, slang, or peculiar phraseology of a class, originally that of thieves and rogues.” It is attested as long ago as 1860 and was apparently borrowed from French, but its history beyond that point is unknown.

the more you know.gif

(Our university library subscribes to the OED, and by Gad I’m going to get their money’s worth.)

Huh, so something like a cant then (and indeed Wiktionary lists it as a synonym)

I still doubt “argotism” (as far as it’s a word in actual use at all) is countable.

I understand very well that publishers are fucking leeches that contribute nothing to the scientific process, but it’s still weird to me that this is extremely widespread but there’s no controversy about it. like, there’s an outright refusal to fix these things during peer review when flagged, and there are no consequences for authors using LLMs to generate absolute bullshit and get it published. like fuck me, college kids get a harsher punishment when they get caught using the fancy plagiarism machine.

aren’t these the exact ingredients you need for a scientific crisis, specifically one that achieves the fascist goal of destroying the public’s trust in science? is there a bunch of backlash I’m missing because I’m very sorry, but as an AI language model, I don’t have access to the mailing lists where “the scientists with the largest hadrons to collide” call other scientists “trifling but with many more words”

oh there’s a massive controversy about it. The Chronicle of Higher Ed’s been super mad, Retraction Watch has been on fire, and every librarian group text I’m on is sending around enraged examples every day. But the barrel over which the big scholarly publishers have academia is a difficult one to address without cooperation from professors – and this system is *working* for professors.

But yeah, there’s a huge scandal, just … not one that’s changing anything.

I wonder what percentage of fraudulent AI-generated papers would be discovered simply by searching for sentences that begin with “Certainly, …”
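For anyone who wants to try that search on text they already have, here is a rough Python sketch. The phrase list is an assumption pulled from the excerpts quoted in this thread, and `find_telltales` is a made-up helper name, not part of any existing tool. It only catches leftovers nobody bothered to delete, so it can't tell you a percentage of anything; at best it gives a lower bound on the sloppiest cases.

```python
import re

# Hypothetical phrase list, taken from the excerpts quoted in this thread.
# It is nowhere near exhaustive and will miss anything lightly edited.
TELLTALE_PATTERNS = [
    r"\bCertainly, here(?:['’]s| is| are)\b",
    r"\bas an AI language model\b",
    r"\bI am an AI language model\b",
]

# One case-insensitive regex that matches any of the phrases above.
_TELLTALE = re.compile("|".join(TELLTALE_PATTERNS), re.IGNORECASE)


def find_telltales(text: str) -> list[str]:
    """Return every telltale phrase found in text, in order of appearance."""
    return [match.group(0) for match in _TELLTALE.finditer(text)]


if __name__ == "__main__":
    sample = (
        "Certainly, here is a possible introduction for your topic:"
        "Lithium-metal batteries are promising candidates."
    )
    print(find_telltales(sample))
```

A hit means a human should go read the paper, nothing more: the same phrases also show up in papers that quote chatbot output on purpose, and in threads like this one.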